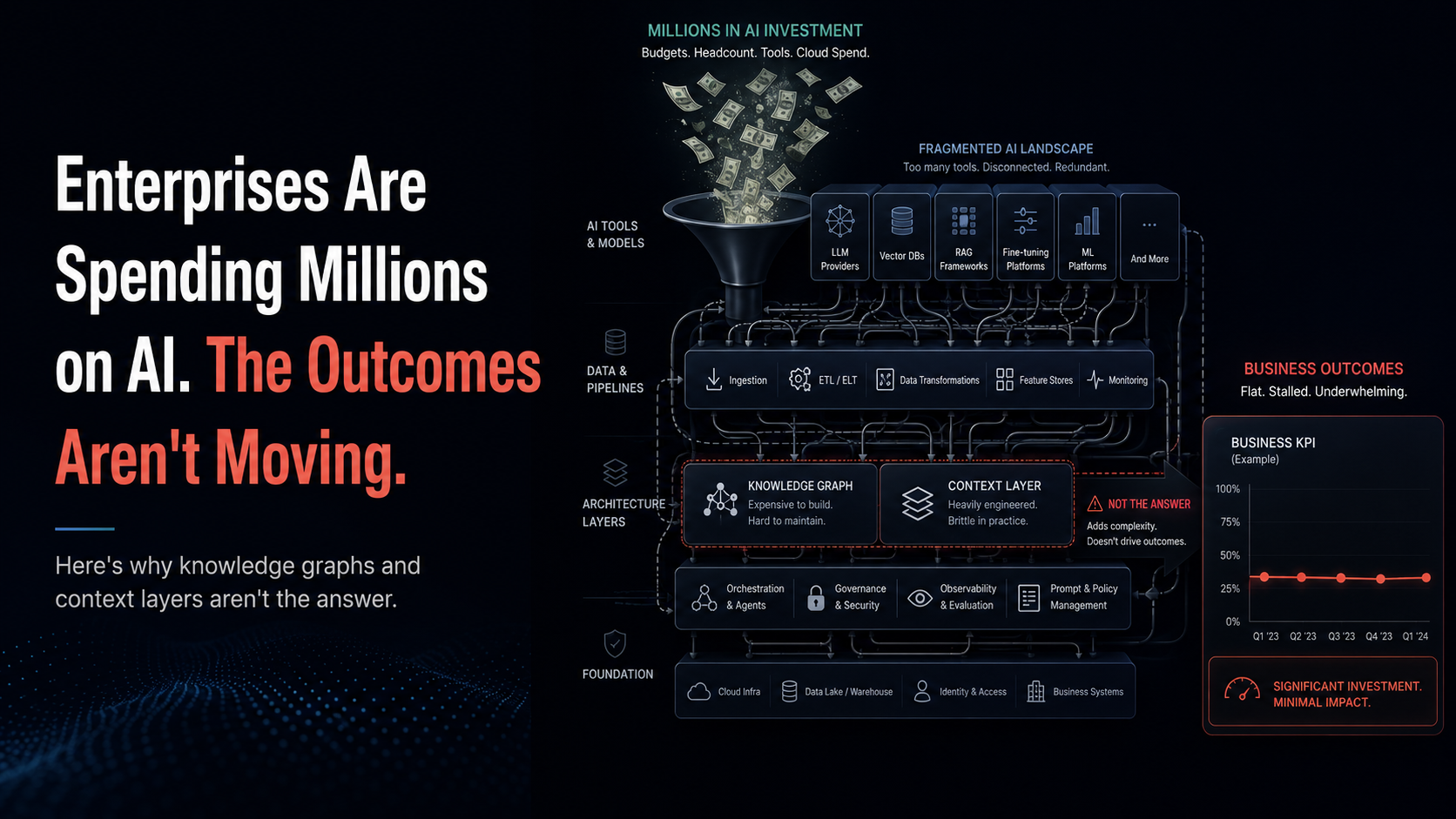

Enterprises Are Spending Millions on AI. The Outcomes Aren't Moving.

TL;DR

Enterprise AI investments are climbing, but pipeline, win rate, and deal velocity remain flat. The problem isn't the knowledge graph or context layer—it's the missing execution layer. Knowledge graphs surface insights; they can't issue marching orders. In sales, the gap between insight and execution is where ROI dies. Zime is built as an execution layer that takes the CRO's strategy, encodes it as evaluable actions, and delivers them just-in-time into the rep's workflow—compressing the typical 9-month enterprise AI ramp into 7 days.

The board deck is starting to look the same at almost every enterprise.

Slide one: AI investment, year over year, climbing. Slide two: agents deployed, copilots rolled out, knowledge graphs commissioned, context layers stood up. Slide three: pipeline, win rate, deal velocity. Flat.

The disconnect is now impossible to hide. According to IDC's 2024 AI Opportunity Study, enterprises are projecting an average ROI of $3.50 for every dollar invested in AI, but BCG's research on scaling AI tells the harder truth: only about 25% of organizations are actually realizing meaningful value at scale. Gartner predicts that more than 40% of agentic AI projects will be cancelled by the end of 2027 due to escalating costs, unclear business value, and inadequate risk controls.

The reflexive answer in 2025 was: "We need better data architecture. We need a knowledge graph. We need a context layer." So enterprises spent. Tens of millions on data unification projects. Months building knowledge graphs that ingest CRM, calls, contracts, and CPQ data. Whole platform teams stood up to maintain context layers feeding agents that, on paper, should now be smart enough to drive sales outcomes.

The agents got smarter. The pipeline didn't move.

This is the architecture problem nobody is talking about clearly enough: knowledge graphs and context layers are necessary but they are not sufficient. They surface insights. They can't issue marching orders. And in sales—where every dollar of pipeline depends on a rep doing a specific behavior at a specific moment with a specific buyer—the gap between insight and execution is where all the ROI goes to die.

The Knowledge Graph Trap: Why Insight Surfaces Without Moving Outcomes

A knowledge graph is a beautiful piece of infrastructure. It connects your CRM, your call recordings, your CPQ data, your product usage, your support tickets, and your marketing engagement into a queryable web of relationships. Ask it a question—which deals look like the ones we won last quarter?—and it returns an answer with citations.

This is genuinely valuable for analytics. It is genuinely insufficient for execution. Here's why.

1. A Knowledge Graph Can't Learn From Strategy That Hasn't Been Executed Yet

This is the cleanest example, and the one most CIOs miss until they're a quarter in.

A new CRO joins. They set a new strategy: shift the motion upmarket, prioritize a new vertical, lead with a new product line. The strategy is the right one. The KG cannot help.

Why? Because the KG learns from digital exhaust—calls, emails, deals, CRM activity. The new strategy has zero calls to learn from. Zero won deals. Zero historical pattern. The graph has nothing to ingest because the motion hasn't happened yet. The CRO's marching orders exist in their head, in a deck, in a Monday morning all-hands. The graph cannot see any of that.

Six months in, when there's finally enough data, the strategy has already drifted. The reps have improvised. The pattern the graph eventually learns is the compromised version of the strategy, not the original intent.

Zime solves this differently. Zime's Forward Deployed Engineers (FDEs) interview GTM leaders on day one—extracting strategy, intent, and marching orders before a single call is recorded. The required data sources connect, the strategy gets encoded as actions, and the system deploys to every rep the same day. Strategy doesn't have to wait for digital exhaust to catch up. It gets executed from day one, and the graph learns from execution rather than from drift.

2. Granularity Is Too Nested for Any Knowledge Graph to Configure

This is the architectural failure that kills most enterprise KG deployments, and HP is the textbook example.

HP doesn't run one sales motion. Inside HP, Poly (audio/video collaboration) sells differently from AI-PC. Inside each, enterprise sells differently from mid-market. Inside each of those, regional motions vary. Inside each of those, individual product lines have their own qualification criteria, their own competitive dynamics, their own buyer personas.

A knowledge graph can model these as nodes and edges. It cannot configure the conditional specificity—the if-this-then-that logic of which behavior matters in which context for which deal. The graph knows the relationships exist. It cannot tell a rep working a Poly enterprise deal in EMEA what their next move should be, because that requires layered conditional execution, not graph traversal.

This is where Zime's vertical model architecture takes over. Reinforcement learning on company-specific language—PAN means Palo Alto Networks, "sassy" means SASE, SASE deals close 4x faster than SD-WAN—gets layered with FDE-led configuration of the conditional specificity that governs how reps should actually behave in each motion. The system isn't trying to reason from a flat graph at runtime. It's executing a configured playbook that already encodes the conditional logic, with the graph providing reinforcement and recency.
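To make "layered conditional execution" concrete, here is a minimal sketch of a configured playbook lookup. It is an illustration only, not Zime's implementation: the `PLAYBOOK` table, the `next_action` function, and every action string are hypothetical, and only the HP-style layering (business unit, then segment, then region) comes from the example above.

```python
# Hypothetical playbook. Keys are (business_unit, segment, region); None is a wildcard.
# Every rule and action string is illustrative, not taken from a real deployment.
PLAYBOOK = {
    ("Poly", "enterprise", "EMEA"): "Confirm the regional procurement contact before sending pricing",
    ("Poly", "enterprise", None): "Multithread to a VP-level sponsor",
    ("Poly", None, None): "Book a product demo",
    (None, None, None): "Log next steps in the CRM",
}


def next_action(business_unit: str, segment: str, region: str) -> str:
    """Return the most specific configured action, falling back to broader rules."""
    candidates = [
        (business_unit, segment, region),  # exact motion
        (business_unit, segment, None),    # any region
        (business_unit, None, None),       # any segment, any region
        (None, None, None),                # company-wide default
    ]
    for key in candidates:
        if key in PLAYBOOK:
            return PLAYBOOK[key]
    raise LookupError("playbook has no default rule")


print(next_action("Poly", "enterprise", "EMEA"))
print(next_action("AI-PC", "mid-market", "EMEA"))
```

The point of the sketch is that the rule for a Poly enterprise deal in EMEA is written down ahead of time and resolved by specificity. Nothing has to be inferred from graph traversal at runtime.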

Customer proof — Versa Networks:

When Versa Networks deployed Zime, Zime built a SASE-specific Action Engine trained on Versa's existing product release notes, sales playbooks, battlecards, customer calls, and case studies—encoded against Versa's industry, product lines, deal stages, and distribution channels. The result: a 10% lift in win rate, a 20% increase in pipeline from reduced lead wastage, and 2+ hours per week saved for every rep and manager. A flat knowledge graph could not have configured that.

3. CRO Authority Cannot Be Replicated by a Graph

This is the deepest problem and the one that explains why so many context-layer deployments produce activity without outcomes.

Reps don't follow data. They follow the CRO. When the CRO says "this quarter we are focused on multithreading at the VP level on every deal over $100K," the reps move—not because the data suggests it, but because the CRO said so and the CRO holds the comp plan, the territory, and the promotion path. Authority is the mechanism. Data is, at most, the justification.

A knowledge graph can surface the insight that multithreading correlates with closed deals. It cannot issue the order. It cannot say "this deal needs a VP touch by Friday or it goes red in pipeline review." It cannot make non-compliance visible to the manager. It cannot make compliance reinforce itself across the team. It produces a dashboard. The CRO produces movement.

Zime is architected around the recognition that the system has to operate as an extension of CRO authority, not as a parallel insight surface. The compounding knowledge graph is seeded with marching orders—the CRO's strategy, encoded as evaluable actions—and grows from there with every win, loss, and rep behavior. Human-in-the-loop approvals keep the strategy current as the CRO updates direction. The result is a system that doesn't just observe what happens; it carries the CRO's voice into every rep's daily workflow.
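One way to picture a marching order "encoded as an evaluable action" is as an instruction paired with two checks: does it apply to this deal, and was it done? The sketch below uses the multithreading example from above. The `Deal` and `Action` classes, their field names, and the title-matching rule are all assumptions made for illustration, not Zime's data model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Deal:
    name: str
    amount_usd: float
    contact_titles: list[str]  # titles of buyer contacts engaged so far


@dataclass
class Action:
    instruction: str
    applies_to: Callable[[Deal], bool]  # when the order is in force
    is_done: Callable[[Deal], bool]     # evidence that the rep followed it

    def evaluate(self, deal: Deal) -> str:
        if not self.applies_to(deal):
            return "not_applicable"
        return "compliant" if self.is_done(deal) else "non_compliant"


# Hypothetical encoding of "multithread at the VP level on every deal over $100K".
multithread_vp = Action(
    instruction="Multithread at the VP level on every deal over $100K",
    applies_to=lambda d: d.amount_usd > 100_000,
    is_done=lambda d: any(t.upper().startswith("VP") for t in d.contact_titles),
)

print(multithread_vp.evaluate(Deal("Acme", 250_000, ["Manager"])))
```

Because the outcome is a status rather than a chart, a non-compliant deal can be routed to the manager instead of waiting to be noticed on a dashboard.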

Customer proof — SonicWall:

SonicWall used Zime to make sales behaviors observable, coachable, and enforceable across their team. After deployment, reps making clear end-of-call asks rose by 30–40%—a behavior the CRO wanted but couldn't enforce at scale through dashboards alone. Based on the rollout's success, SonicWall expanded from 40 to 85 Zime licenses.

The Real Architecture Question: Insight vs. Execution

Most enterprise AI strategies are currently structured around two layers: the data layer (warehouses, lakes, CRM systems of record) and the intelligence layer (knowledge graphs, context layers, agents). The implicit assumption is that good data plus good intelligence equals good outcomes.

For sales, that assumption is wrong. There's a missing third layer: an execution layer that takes the intelligence and turns it into specific marching orders that reach specific reps inside their existing workflow at the specific moment they can act.

Without the execution layer, a KG-powered agent can tell a rep, accurately and with citations, that the deal looks at risk. It cannot tell that rep what to do about it. It cannot make that recommendation appear inside the rep's Slack 30 minutes before their next call. It cannot evaluate whether they actually did what was recommended. It cannot make the manager aware if they didn't.

This is the difference between observation and execution, and it is the difference between AI investment that returns and AI investment that gets quietly written off.

Zime is built as an execution layer. Insights from the underlying graph become just-in-time Actions delivered into the rep's existing workflow—Slack, Teams, the CRM. Each action is evaluable, so managers can coach to evidence rather than instinct. The graph compounds in the background, but the unit of value is the behavior change at the rep level, not the dashboard at the manager level.
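The "just-in-time" part reduces to a timing rule. As a rough sketch, assuming the 30-minute lead time mentioned earlier and a hypothetical list of (call start, instruction) pairs, the delivery window could be computed as follows. Actually posting to Slack, Teams, or the CRM is deliberately left out, and none of these names reflect Zime's API.

```python
from datetime import datetime, timedelta

# Assumed lead time, taken from the "30 minutes before their next call" example.
LEAD_TIME = timedelta(minutes=30)


def delivery_time(call_start: datetime) -> datetime:
    """When an action should reach the rep for a given call."""
    return call_start - LEAD_TIME


def due_actions(scheduled: list[tuple[datetime, str]], now: datetime) -> list[str]:
    """Return instructions whose delivery time has arrived and whose call has not started."""
    return [
        text
        for call_start, text in scheduled
        if delivery_time(call_start) <= now < call_start
    ]


call = datetime(2025, 1, 6, 15, 0)
scheduled = [(call, "Close the call with a clear ask for the next meeting")]
print(due_actions(scheduled, datetime(2025, 1, 6, 14, 45)))
```

A scheduler would call `due_actions` on a short interval and hand the results to whatever messaging integration the team already uses.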

Customer proof — Bureau:

Bureau, a no-code identity decisioning platform, deployed Zime to embed pre-call action checklists directly into the rep's existing messaging app. The result was a 30% increase in deal conversions from improved discovery and checklist adherence, plus an hour saved per rep per day. Ranjan Kumar, Founder & CEO of Bureau, said: "We increased our ARR by 10% with Zime seamlessly getting our product releases to our sales team in the form of just-in-time Actions." The graph alone wouldn't have moved the number. The execution layer did.

9 Months to 7 Days: Why Time-to-Impact Is the New ROI Metric

The standard enterprise AI deployment timeline looks like this: 3 months to scope, 3 months to build the knowledge graph, 3 months to integrate the agent layer, then a long tail of fine-tuning while the team waits for outcomes. By the time the system can produce its first useful guidance, most CIOs are already preparing the awkward "we need more time" memo for the board.

Zime's vertical model architecture compresses this dramatically. FDEs interview the GTM leaders on day one and extract the strategy directly. Required data sources connect over the following days. Reinforcement learning on company-specific language and FDE-led configuration of the motion's conditional specificity proceed in parallel. The compounding knowledge graph gets seeded with the CRO's marching orders, then grows organically with every subsequent win, loss, and rep behavior.

Typical enterprise ramp: 9 months. Time to impact with Zime: 7 days.

This isn't a minor efficiency gain. It's a different ROI calculation. The CFO asking when the AI investment will return doesn't have to wait three quarters to find out. The CRO testing a new motion doesn't have to wait six months for the system to catch up. The CIO presenting to the board can show measurable outcomes in the same quarter the project launched.

What This Means for Enterprise AI Strategy

Three implications for executives evaluating where the next dollar of AI investment should go:

1. The KG is plumbing. Stop treating it as the strategy.

A knowledge graph is necessary infrastructure, but on its own it does not produce sales outcomes. If the deployment plan ends at "stand up the context layer and connect it to a copilot," the deployment will produce activity without revenue movement. Budget the execution layer separately and make it the gating dependency for the agent layer.

2. Time-to-impact should be the headline metric, not model quality.

A perfectly tuned graph that takes 9 months to deploy is worth less than an execution layer that moves outcomes in 7 days. Evaluate vendors on how fast their system can carry the CRO's strategy into the rep's daily workflow, not on how elegant the underlying intelligence is.

3. Authority and execution have to be designed into the architecture.

The system has to operate as an extension of CRO authority, not as a parallel observation tool. That means seeding the system with strategy on day one, encoding marching orders as evaluable actions, surfacing those actions inside the rep's existing workflow, and making compliance visible to managers. Anything else is a dashboard with extra steps.

The customer evidence is consistent on what happens when the architecture is right:

• SonicWall: 30–40% improvement in reps making clear end-of-call asks, expansion from 40 to 85 licenses

These aren't gains from "AI in general." They aren't gains from a knowledge graph alone. They're gains from a system that took the CRO's marching orders and turned them into behaviors that happened, in workflow, with evidence, in days rather than quarters.

The Real Question

The enterprise AI conversation right now is dominated by infrastructure questions: which model, which graph, which context layer, which agent framework. These are the wrong questions, or at least the incomplete ones.

The question that actually predicts whether the AI investment will return is "can our system issue and enforce marching orders, or does it just surface insights?" If the answer is the latter, the deployment will produce activity without outcomes—which is exactly the pattern showing up in board decks across the enterprise right now.

Knowledge graphs surface what happened. Context layers organize what's known. Neither of them executes. The companies whose AI investments are about to return are the ones building or buying the execution layer that sits on top—the layer that translates strategy into behavior, behavior into evidence, and evidence back into strategy.

That's the layer Zime is built to be. And it's the reason the typical 9-month enterprise AI ramp can collapse into 7 days of time to impact.

The agents are not the strategy. The graph is not the strategy. The execution layer is.


Related Resources

External sources cited: IDC AI Opportunity Study (2024); BCG "Where's the Value in AI" research; Gartner agentic AI forecast (June 2025); Salesforce State of Sales (2024); HubSpot State of Sales.