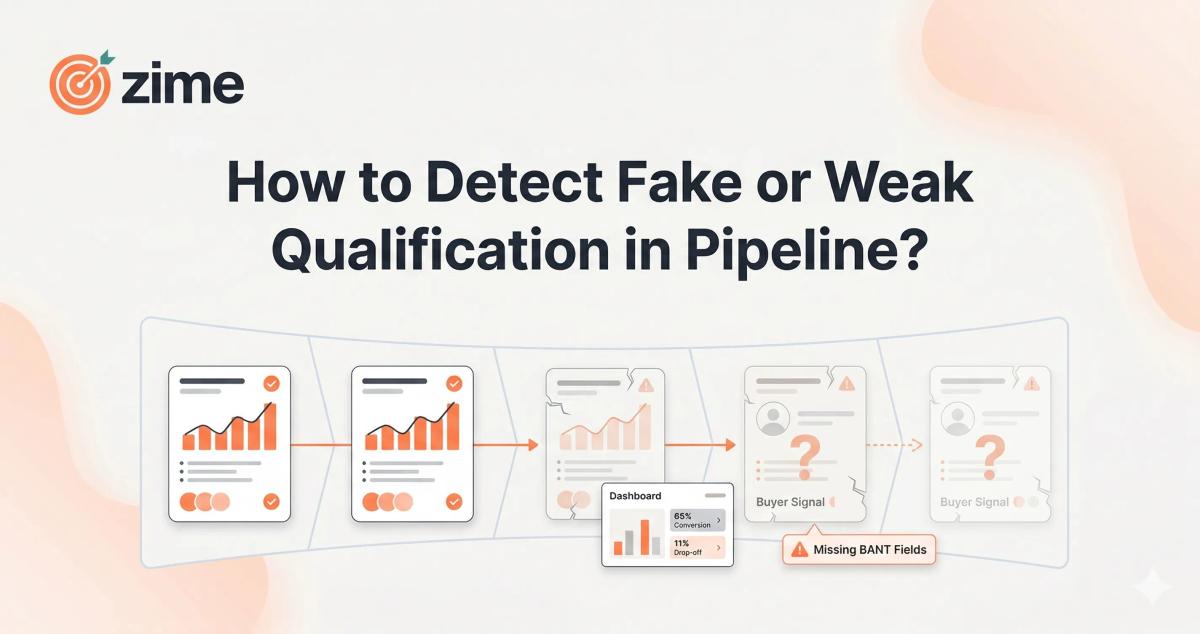

How to Detect Fake or Weak Qualification in Pipeline

- Fake qualification is a verification problem, not a pipeline volume problem. Deals advance because reps update fields, not because customers demonstrate genuine buying intent. Stage labels are not the same as qualification evidence.

- The real gap is visibility at the behavior level. Managers can only review rep-reported summaries, making it nearly impossible to distinguish truly qualified deals from optimistically labeled ones, especially at scale.

- Common fixes like MEDDIC training, updated playbooks, and call recording tools fail because they address knowledge, not behavior. More data entry fields produce more inaccurate data, not better pipeline health.

- Detection requires behavioral exit criteria, not stage labels. Each deal stage should require conversation-verified signals: customer-stated consequences of inaction, confirmed timelines, and evidence of real urgency pulled directly from call transcripts.

- The fix starts with your own wins. Auditing closed-won call data to build qualification benchmarks, then inspecting deals against those criteria instead of stage status, is what separates reactive pipeline reviews from proactive revenue execution.

How Do I Detect Fake or Weak Qualification in Pipeline?

There is a specific tension playing out inside most revenue teams right now. The pipeline is full. The stages are populated. But when the forecast call happens, the numbers do not hold. When quarter-end arrives, deals that looked certain stall, discount, or disappear. The question sales leaders are actively working through is not whether they have enough pipeline. It is whether the pipeline they have is real.

Fake or weak qualification is not random. It follows a predictable pattern: deals advance because reps updated a field, not because customers demonstrated genuine buying intent. Detecting it requires moving from stage labels to behavioral evidence, from rep-reported status to conversation-verified signals.

Who This Is Really About

This problem is sharpest for sales leaders managing growth-stage B2B SaaS teams, particularly those running:

- High-velocity inbound models with 6 to 15 reps, each handling 3 to 4 new conversations per day

- Organizations that have deployed MEDDIC, BANT, or a custom qualification framework but see inconsistent execution across the team

- Sales managers spending 30 minutes per rep per week in pipeline reviews, trying to find the deals worth coaching

- Revenue leaders preparing for QBRs with forecast numbers they do not fully trust

These teams are not lacking process. They have playbooks. They have a CRM. The problem is that neither system can distinguish a deal where the customer confirmed a specific, high-urgency business pain from one where the rep typed "pain identified" in Salesforce and moved on.

The Real Problem

At the rep level, it shows up as stage advancement without exit criteria being met. Discovery is marked "complete" because the call happened. Pain points are logged as generic categories rather than specific customer statements tied to business impact. Timeline fields stay blank, or close dates are set optimistically with no customer-anchored event behind them.

At the manager level, the problem surfaces during pipeline reviews. Managers rely on rep-reported summaries. They inspect outcomes and deal stages, not behavior. There is no reliable way to tell whether a given deal was genuinely qualified or simply labeled that way.

At the leadership level, this compounds into forecast misses that no one saw coming. According to Gartner, poor CRM data hygiene is one of the leading reasons forecasts miss by more than 10%, and improving it can increase forecast accuracy by up to 30%. The underlying issue is structural: teams define what qualification means but never build a mechanism to verify it at scale.

What Is Actually Causing This

1. Qualification is treated as a one-time event, not a continuous standard.

Most teams qualify once, at the top of funnel, and carry that signal forward. Buyers change their minds. Urgency fades. A deal that was legitimately qualified three months ago may now be dead weight that no one wants to call out.

2. CRM data reflects labels, not evidence.

When a rep marks "Pain Identified," the system records that label. It does not record whether the customer said "this problem is costing us $400,000 annually" or merely mentioned a vague inconvenience. Forrester research finds that sales reps waste 67% of their time on deals that will never close, largely because of this kind of surface-level qualification. A 2025 Validity study found that 76% of CRM users report that less than half of their organization's CRM data is accurate.

3. Managers inspect status, not behavior.

Pipeline reviews focus on stage, close date, and deal size. They rarely ask: what specific language did the customer use to confirm urgency? Was a timeline anchored to a real business event? What objections came up, and how were they handled? Without behavioral inspection, pipeline reviews become status theater.

4. Winning behaviors never become shared standards.

What separates a deal that closes from one that stalls is rarely documented. Top rep behaviors stay locked in individual performance. The result: qualification criteria live in a static playbook while actual patterns of winning evolve in the market without ever updating the framework.

What Sales Teams Usually Try First

The standard response when leaders recognize weak pipeline is to pull familiar levers. They run a MEDDIC certification. They update the playbook. They add qualification fields to Salesforce or HubSpot. They require a deal scorecard before advancing a stage. They invest in call recording platforms, expecting that recorded conversations will give managers the visibility they have been missing.

These responses are logical. They address the right general problem. Teams invest in them in good faith.

Why These Approaches Fail

Training solves for knowledge but it does not reliably change behavior at the deal level. A rep can pass a MEDDIC certification and still run a discovery call that produces no actionable qualification signal. Research shows that 79% of marketing leads never convert to sales, largely because qualification frameworks are understood in theory but applied inconsistently at the frontline.

Generic call intelligence tools surface transcripts. What they cannot do is evaluate whether a specific call met your qualification standard for your specific ICP. A tool that flags "pain point mentioned" is not the same as one that distinguishes a customer expressing a strategic business consequence from one noting a minor operational inconvenience. That nuance determines whether a deal converts.

Adding CRM fields creates a data entry burden that reps deprioritize. Industry research shows that 79% of opportunity-related data gathered by sales reps is never entered into CRM due to manual entry friction. More fields do not produce better data without a system that populates them from verified, conversation-level sources.

What Actually Drives Behavior Change

The shift that effective teams make is from measuring what reps report to measuring what conversations reveal.

This means using behavioral evidence from actual calls to determine whether qualification criteria were met, not relying on what the rep entered after the fact. It means scoring deals at each stage based on customer-expressed signals: specific language confirming urgency, stated consequences of inaction, confirmed timelines tied to real business events.

It also means building qualification standards from your own winning deals. What did your customers say in the discovery calls that preceded your top closed-won outcomes? Those patterns, derived from your actual conversations with your actual ICP, are your real qualification criteria. Not a generic framework applied uniformly, but your version of it, verified by the evidence of what actually closes.

What Sales Leaders Are Actually Saying

Suraj Ramesh manages a sales team at Sprinto, a B2B compliance SaaS company helping businesses achieve SOC 2 and ISO certifications. He runs an inbound-heavy model with 8 reps handling 24-plus new conversations daily. His team operates at high velocity, where qualification consistency is both critical and difficult to enforce. He describes the core visibility gap he encounters in pipeline reviews this way:

"A deal has activity, so it is qualified. But what if that activity means nothing? These are two very different things."

On the practical impossibility of deal-level inspection when managing a volume-intensive team:

"I have eight reps and twenty-four calls a day. When I do a weekly pipe review, I cannot look at every single deal. It is really not possible."

A Practical Framework to Detect Fake Qualification

Step 1: Define behavioral exit criteria for each stage.

Replace stage labels with observable standards. A deal should not advance past discovery unless the transcript contains customer-stated pain with a specific business consequence and a timeline confirmed by the customer. Vague labels like "Qualified" or "Active" are not criteria.
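One way to make exit criteria concrete is to write them down as data rather than as a stage name. The sketch below is illustrative only: the class names, the criterion names, and the idea of storing a supporting customer quote per criterion are assumptions, not part of any specific CRM or tool.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ExitCriterion:
    name: str          # hypothetical identifier for the criterion
    description: str   # what a reviewer should be able to find in the transcript


@dataclass
class StageDefinition:
    stage: str
    exit_criteria: list = field(default_factory=list)

    def unmet(self, evidence: dict) -> list:
        """Return the names of criteria that have no supporting customer quote."""
        return [
            c.name
            for c in self.exit_criteria
            if not evidence.get(c.name, "").strip()
        ]

    def can_advance(self, evidence: dict) -> bool:
        """A deal may leave the stage only when every criterion has evidence."""
        return not self.unmet(evidence)


# Assumed criteria for the discovery stage, mirroring the standard described above.
DISCOVERY = StageDefinition(
    stage="Discovery",
    exit_criteria=[
        ExitCriterion("customer_stated_pain", "Pain described in the customer's own words"),
        ExitCriterion("business_consequence", "Specific business consequence of the pain"),
        ExitCriterion("confirmed_timeline", "Timeline confirmed by the customer"),
    ],
)

# Evidence maps each criterion to a quote pulled from the call transcript.
evidence = {
    "customer_stated_pain": "We keep missing audit deadlines.",
    "business_consequence": "This problem is costing us $400,000 annually.",
}
print(DISCOVERY.unmet(evidence))
```

Keeping the quote alongside the criterion means a manager reviewing the deal sees what the customer actually said, not just a checked box.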

Step 2: Score qualification from conversation evidence, not rep-reported fields.

Use call recordings or AI-assisted transcript analysis to verify whether behavioral criteria were met. This removes reliance on self-reporting and gives managers a factual, deal-specific basis for coaching conversations.
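As a rough illustration of scoring from conversation evidence, the sketch below scans only the customer's turns in a transcript for a few signal patterns. The signal names and regular expressions are assumptions chosen for the example; keyword matching is crude, and a real system would more likely use a language model or a trained classifier tuned to your own calls.

```python
import re

# Hypothetical signal patterns. These are deliberately simple and will miss
# many phrasings; they only show the shape of the approach.
SIGNAL_PATTERNS = {
    "quantified_consequence": re.compile(
        r"\$\s?\d[\d,]*(?:\.\d+)?|\b\d+(?:\.\d+)?\s?%"
    ),
    "confirmed_timeline": re.compile(
        r"\b(?:by|before|until)\s+(?:the\s+)?(?:end of\s+)?"
        r"(?:q[1-4]|january|february|march|april|may|june|july|august|"
        r"september|october|november|december)\b",
        re.IGNORECASE,
    ),
    "consequence_of_inaction": re.compile(
        r"\bif we (?:don't|do not|can't|cannot)\b", re.IGNORECASE
    ),
}


def score_transcript(turns):
    """Collect customer quotes that match each signal.

    `turns` is a list of (speaker, text) pairs. Rep statements are ignored,
    because the goal is evidence of what the customer said.
    """
    evidence = {name: [] for name in SIGNAL_PATTERNS}
    for speaker, text in turns:
        if speaker != "customer":
            continue
        for name, pattern in SIGNAL_PATTERNS.items():
            if pattern.search(text):
                evidence[name].append(text)
    return evidence


turns = [
    ("rep", "This could save you $400,000 a year."),
    ("customer", "This problem is costing us $400,000 annually."),
    ("customer", "We need this live before the end of March."),
]
for signal, quotes in score_transcript(turns).items():
    print(signal, quotes)
```

Filtering on the speaker is the important design choice here: a dollar figure stated by the rep is a pitch, while the same figure stated by the customer is qualification evidence.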

Step 3: Segment pipeline by qualification signal strength.

Separate deals into three buckets: those where exit criteria were met and customer engagement is active; those where criteria were met but engagement has dropped; and those where criteria were never actually met. Each requires a different action. Treating all three the same is how pipeline bloat compounds.
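The three buckets can be expressed as a small classification rule. This is a minimal sketch under stated assumptions: the `Deal` fields are hypothetical, and the 30-day window used to decide whether engagement is "active" is an illustrative default you would tune to your own sales cycle.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from enum import Enum
from typing import Optional


class Bucket(Enum):
    QUALIFIED_ACTIVE = "criteria met, engagement active"
    QUALIFIED_STALLED = "criteria met, engagement dropped"
    UNQUALIFIED = "criteria never met"


@dataclass
class Deal:
    name: str
    criteria_met: bool                       # verified from conversation evidence
    last_customer_activity: Optional[date]   # last customer-initiated touch, if any


def segment(deal: Deal, today: date, active_window_days: int = 30) -> Bucket:
    """Assign a deal to one of the three buckets."""
    if not deal.criteria_met:
        return Bucket.UNQUALIFIED
    if deal.last_customer_activity is None:
        return Bucket.QUALIFIED_STALLED
    age = today - deal.last_customer_activity
    if age <= timedelta(days=active_window_days):
        return Bucket.QUALIFIED_ACTIVE
    return Bucket.QUALIFIED_STALLED


today = date(2025, 6, 30)
pipeline = [
    Deal("Acme", True, date(2025, 6, 20)),
    Deal("Globex", True, date(2025, 4, 1)),
    Deal("Initech", False, date(2025, 6, 29)),
]
for deal in pipeline:
    print(deal.name, "->", segment(deal, today).value)
```

Note that recent activity does not rescue a deal whose criteria were never met; the check on `criteria_met` comes first on purpose.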

Step 4: Build qualification benchmarks from your closed-won deals.

Audit the last 20 to 30 closed-won deals. What customer language appeared consistently in early-stage calls? What questions did your top reps ask that produced clear qualification signals? Use these patterns to calibrate what genuinely qualified looks like for your team and ICP.
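Once each closed-won deal has been tagged with the signals heard in its early-stage calls, the audit reduces to counting how often each signal appears. The sketch below assumes that tagging has already been done (by hand or by transcript analysis); the signal names and the 60% cutoff are hypothetical.

```python
from collections import Counter


def signal_benchmarks(closed_won_signals, min_share=0.6):
    """Return signals that appear in at least `min_share` of closed-won deals.

    `closed_won_signals` is a list with one collection of signal names per
    deal. The result maps each qualifying signal to the share of deals
    in which it appeared.
    """
    total = len(closed_won_signals)
    if total == 0:
        return {}
    # Count each signal at most once per deal.
    counts = Counter(sig for signals in closed_won_signals for sig in set(signals))
    return {
        sig: count / total
        for sig, count in counts.items()
        if count / total >= min_share
    }


# Illustrative tags for four closed-won deals (not real data).
closed_won = [
    {"quantified_consequence", "confirmed_timeline"},
    {"quantified_consequence"},
    {"quantified_consequence", "executive_sponsor_named"},
    {"quantified_consequence", "confirmed_timeline", "executive_sponsor_named"},
]
print(signal_benchmarks(closed_won))
```

With only 20 to 30 deals the shares are noisy, so treat the output as a starting point for discussion with top reps rather than a statistical finding.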

Step 5: Shift pipeline reviews from status to criteria.

Change the central question in pipeline reviews from "what stage is this deal in?" to "what specific evidence exists that this deal meets our qualification standard?" This one shift transforms a status update into a diagnostic coaching conversation.

If You Are Facing This Problem

Use these questions to locate where weak qualification is entering your pipeline:

- Can your reps articulate the specific business consequence the customer described, or only the general category of problem?

- Do your pipeline stage definitions include observable behavioral criteria, or only activity checkboxes?

- When you review a stalled deal, do you have access to what was actually said on the discovery call?

- What percentage of your pipeline has had no customer-initiated activity in the last 30 days?

- When a deal is marked qualified, what specific evidence exists in your CRM beyond a rep-updated field?

- Are your top-performing reps qualifying differently from average reps, and do you know exactly what they are doing differently?

- Do managers coach reps on what questions to ask, or only review outcomes after deals are already lost?
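The question above about customer-initiated activity can be answered with a simple calculation if you can export the date of the last customer-initiated touch for each open deal. This is a sketch only; the input shape and the function name are assumptions, and the 30-day window follows the question as asked.

```python
from datetime import date, timedelta


def stale_share(last_customer_activity, today, window_days=30):
    """Share of open deals with no customer-initiated activity in the window.

    `last_customer_activity` maps deal name -> date of the last
    customer-initiated touch, or None if there has never been one.
    """
    if not last_customer_activity:
        return 0.0
    cutoff = today - timedelta(days=window_days)
    stale = sum(
        1
        for last_touch in last_customer_activity.values()
        if last_touch is None or last_touch < cutoff
    )
    return stale / len(last_customer_activity)


# Illustrative export (not real data).
open_deals = {
    "Acme": date(2025, 6, 20),
    "Globex": date(2025, 4, 1),
    "Initech": None,
    "Umbrella": date(2025, 6, 28),
}
print(f"{stale_share(open_deals, date(2025, 6, 30)):.0%} of pipeline is stale")
```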

Conclusion

Detecting fake or weak qualification in pipeline is not primarily a data volume problem. It is a verification problem. The signal already exists inside your call recordings and customer conversations. Most teams have simply not built the operational mechanism to surface it consistently and act on it before the damage reaches the forecast.

The core shift is from trusting what reps record to verifying what conversations reveal. Sales leaders who make this shift stop debating whether their pipeline is healthy and start having specific, evidence-based conversations about which deals to advance, which to coach, and which to close out. That discipline, applied consistently, is how forecast accuracy improves and win rates follow.

See How It Works in Practice

If pipeline qualification gaps are compressing your forecast confidence and making coaching conversations reactive rather than proactive, it is worth seeing how teams are making deal-level behavioral signals visible in real time.

Zime is built to operationalize qualification from your own winning conversations, score deals by behavioral evidence at each stage, and give managers a way to inspect and coach to criteria rather than status. More useful than a description is a working walkthrough of how this looks across your actual deal types and sales motion.

Request a Demo with Zime