Pipeline Reviews that Drive Accountability

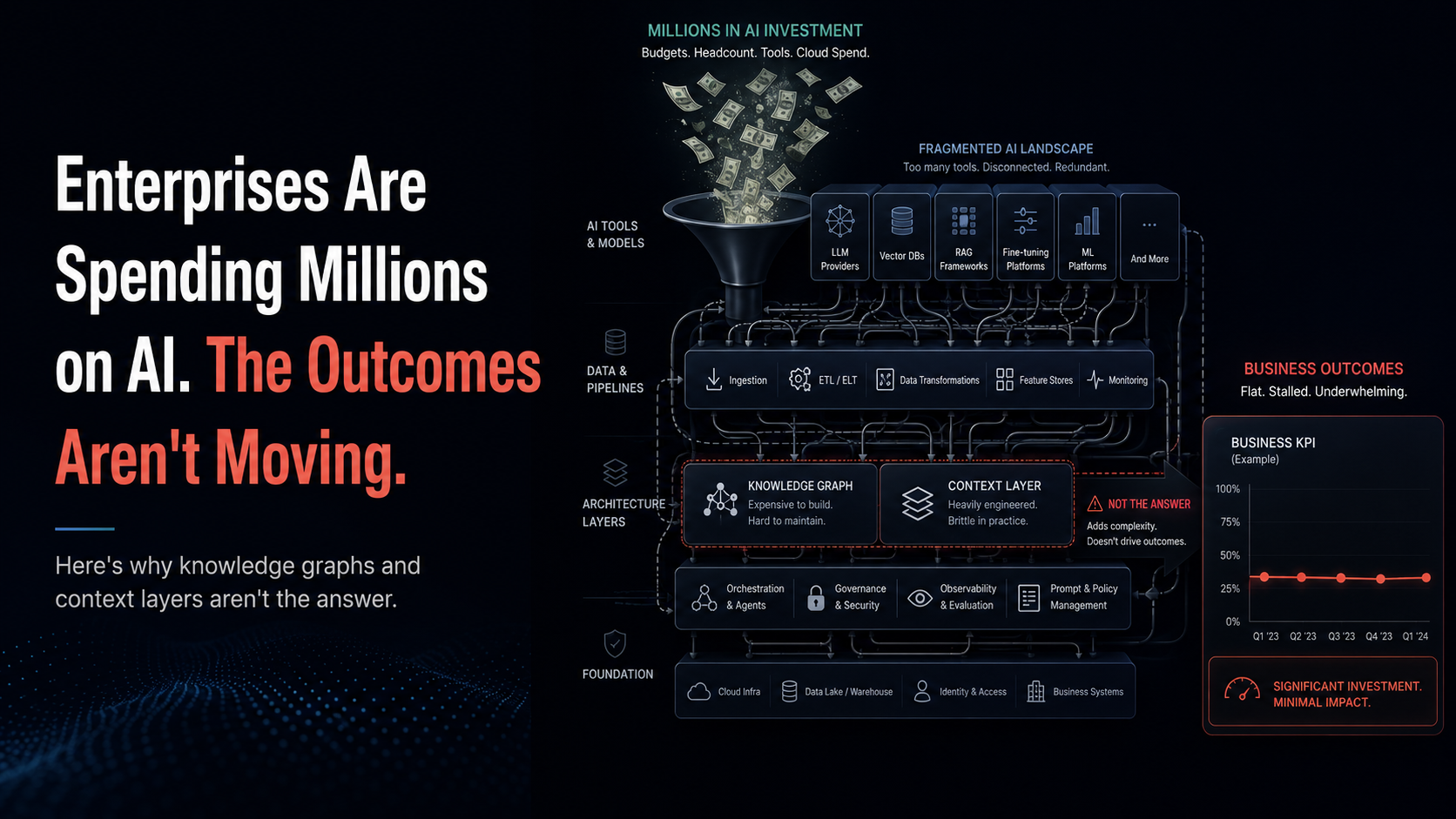

Why most pipeline reviews fail to drive change

The fix is not louder inspection. It is accountable inspection tied to evidence, shared definitions, and habits that eliminate theater. Research across the last decade is consistent on this point: companies that manage their pipeline rigorously and formally grow faster and forecast better. Harvard Business Review reported that organizations practicing effective pipeline management saw about 15 percent higher growth than peers that did not, a result linked to mastering a few disciplined practices rather than adopting a new slogan.

Accountability begins with inputs you can trust. Salesforce's own longitudinal research shows the typical rep spends only about 28 to 30 percent of the week actually selling, with the rest swallowed by data entry, deal admin, and internal work. This is the hidden tax that corrodes "pipeline hygiene" and creates the illusion of progress without real motion. If your review agenda relies on stale notes and rolling close dates, you are inspecting noise.

How we got here: from status updates to accountable reviews

Pipeline reviews did not start as coaching or decision forums. They started as status checkpoints. Over time, leaders learned that status without standard process creates bias and sandbagging. The research that underpins modern sales management made two ideas mainstream. First, establish and enforce a formal process, because formalization correlates with better revenue outcomes. Second, manage the few pipeline practices that actually change results, instead of drowning in metrics. These insights show up repeatedly in Jason Jordan's work summarized by HBR.

The RevOps movement then reframed the review as a cross-functional ritual. When marketing, sales, and customer success share one operating spine, you are no longer debating whose numbers are "more right," you are deciding how to create demand or rescue it. BCG documents how centralizing GTM operations raises speed and alignment when you connect content, enablement, and analytics in one loop. In that world a pipeline review is not an inspection of sellers, it is an inspection of the entire flywheel.

What "pipeline accountability" actually looks like

Accountability is not a tone, it is a set of shared rules: stage movement is based on customer verifiers, not opinion; coverage math is explicit by segment and product; every deal has a next step with an owner and date; close dates change only alongside a reason that would also convince finance. Gartner's recent guidance on forecasting places pipeline data quality at the center: define the signals that separate overdue, stalled, high-risk, optimistic, or pessimistic deals, and record those signals in the CRM so they are visible in the review.

Teams that live these rules benefit twice. First, the review becomes faster and more future-oriented, a point even competitor playbooks emphasize when they advise focusing the meeting on what happens next, not what already happened. Second, the forecast stops drifting because the review is validating the truth of each deal, not the enthusiasm of the rep.
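The shared rules in this section can be written down as explicit checks, so the review starts from flags rather than opinion. The sketch below is illustrative only: the `Deal` fields, the flag names, and the 30-day stall threshold are assumptions made for the example, not definitions from Gartner or from any particular CRM.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional

# Assumption: a deal with no stage movement for this many days counts as stalled.
STALL_THRESHOLD_DAYS = 30


@dataclass
class Deal:
    """Hypothetical deal record; field names are illustrative, not a CRM schema."""
    name: str
    next_step: Optional[str]
    next_step_owner: Optional[str]
    next_step_due: Optional[date]
    close_date: date
    previous_close_date: date
    close_date_change_reason: Optional[str]
    days_since_stage_change: int


def review_flags(deal: Deal, today: date) -> List[str]:
    """Return the accountability rules this deal currently breaks."""
    flags = []
    # Rule: every deal has a next step with an owner and a date.
    if not (deal.next_step and deal.next_step_owner and deal.next_step_due):
        flags.append("missing_next_step")
    elif deal.next_step_due < today:
        flags.append("next_step_overdue")
    # Signal: the expected close date has already passed.
    if deal.close_date < today:
        flags.append("overdue")
    # Rule: close dates change only alongside a recorded reason.
    if deal.close_date != deal.previous_close_date and not deal.close_date_change_reason:
        flags.append("close_date_moved_without_reason")
    # Signal: no stage movement for longer than the assumed threshold.
    if deal.days_since_stage_change > STALL_THRESHOLD_DAYS:
        flags.append("stalled")
    return flags
```

Running a check like this before the meeting means the conversation can open on the flagged deals instead of a full walk through the opportunity table.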

Converting the ritual: pipeline review best practices that create accountability

Start with a clean room and shared language. Guidance on coverage and hygiene recommends weekly sessions to retire outdated opportunities, normalize probabilities, and identify the prospecting gaps that coverage math exposes. Make those hygiene resets non-negotiable ahead of the live review, so the meeting itself is about choices rather than cleanup. Gartner's forecasting framework complements this with a checklist of verifiers that prove stage fit, from multithreaded contacts to a validated budget path.
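To make "coverage math" concrete, here is a minimal sketch of one way to compute it by segment. It assumes coverage means open pipeline divided by the remaining quota gap, and it uses a 3x target ratio purely as a placeholder. The function name, the input shapes, and the target are all assumptions for illustration; your own definitions may differ.

```python
from typing import Dict


def coverage_by_segment(
    open_pipeline: Dict[str, float],
    quota: Dict[str, float],
    closed_won: Dict[str, float],
    target_ratio: float = 3.0,  # assumption: placeholder target, tune per segment
) -> Dict[str, Dict[str, float]]:
    """Compute the remaining gap, coverage ratio, and pipeline shortfall per segment."""
    report = {}
    for segment, target in quota.items():
        # Gap is the quota still to be closed; never negative.
        gap = max(target - closed_won.get(segment, 0.0), 0.0)
        pipeline = open_pipeline.get(segment, 0.0)
        # Coverage is open pipeline relative to the gap it has to fill.
        coverage = pipeline / gap if gap > 0 else float("inf")
        # Shortfall is the extra pipeline needed to reach the target ratio.
        shortfall = max(target_ratio * gap - pipeline, 0.0)
        report[segment] = {
            "gap": gap,
            "coverage": coverage,
            "pipeline_shortfall": shortfall,
        }
    return report
```

The shortfall figure is the useful one for the review: it turns a coverage ratio into an amount of pipeline that named prospecting actions have to create.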

Then change what is on screen. Replace endless opportunity tables with three artifacts: a change log that shows what actually moved since the last review, a risk board that clusters stalled or slipping deals by cause, and a coverage view that ties next-quarter gap to named prospecting actions. When the display is about deltas, risks, and gaps, a manager can ask fewer and better questions, and a rep knows what commitment will be recorded and checked in the next session. Even competitor playbooks converge on this future-focus, because it is the only way the time in the room pays for itself.
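Of the three artifacts, the change log is the easiest to sketch in code: compare the pipeline snapshot from the last review with the current one and list only what moved. The snapshot shape (a dict of deal IDs to field dicts) and the tracked field names below are assumptions for the example, not an export format from any specific CRM.

```python
from typing import Any, Dict, List, Tuple

Snapshot = Dict[str, Dict[str, Any]]
Change = Tuple[str, str, Any, Any]  # (deal_id, what_changed, before, after)


def change_log(
    previous: Snapshot,
    current: Snapshot,
    fields: Tuple[str, ...] = ("stage", "amount", "close_date"),  # assumed field names
) -> List[Change]:
    """List deals added, removed, or changed between two review snapshots."""
    entries: List[Change] = []
    for deal_id in sorted(previous.keys() | current.keys()):
        before = previous.get(deal_id)
        after = current.get(deal_id)
        if before is None:
            entries.append((deal_id, "added", None, None))
        elif after is None:
            entries.append((deal_id, "removed", None, None))
        else:
            # Record one entry per tracked field whose value differs.
            for field in fields:
                if before.get(field) != after.get(field):
                    entries.append((deal_id, field, before.get(field), after.get(field)))
    return entries
```

Deals that do not appear in the output did not move, which is itself a prompt for the risk board.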

Finally, anchor coaching in behaviors, not folklore. Teach and scale the behaviors that correlate with wins, and track whether people do them. Reviews become lighter because the "why" behind the score is visible, and 1:1s become more constructive because the manager is coaching the behaviors that matter.

Where Zime fits when the goal is accountability, not just inspection

Zime's approach is to reduce the administrative drag while increasing the evidence inside the review. The Pipeline Review product frames this outcome explicitly: defog the pipeline using reality-based scoring, jump to the moments that matter, then act. That claim only works if your notes and verifiers are reliable, which is why Zime leans on Smart Call Summaries and CRM Auto Update to get clean inputs without stealing selling time. Living AI Playbooks then turn your strategy into just-in-time actions that can be measured, so a review can ask whether top-performer behaviors actually happened on the calls that matter.

When you need instant context in the meeting, Ask Zime is designed to answer deal questions with citations back to customer voice and CRM data, so debate shifts from opinion to evidence. And for pattern finding across quarters, Win Loss Analysis quantifies which habits correlate with conversion in your motion. These pieces matter because they connect the review to proof, and they do it without adding to the time tax that already keeps reps out of the field.

There is field evidence for the outcomes. Bureau reports a 30 percent increase in deal conversions alongside one hour per rep per day saved as CRM updates and coaching became structured. Versa Networks reports a 10 percent higher win rate and 50 percent less time spent on coaching and pipeline reviews after converting its playbooks into measurable actions and mapping call insights into the CRM. Those are precisely the kinds of shifts that make a review accountable: more motion with less ceremony.

Present and future: accountable reviews in an AI-first RevOps org

The present reality is that management time is scarce and cross-functional processes can consume half of it. Reviews that are not instrumented well can quietly become cost centers. That is why the gains show up when you combine formal process with automation that kills the busywork.

The future is already visible in small ways. Competitor playbooks encourage forward-looking agendas, teams keep quantifying the time tax of admin work, and analysts keep placing pipeline data quality at the heart of forecasting. The teams that will win are the ones that treat pipeline reviews as operating ceremonies, not meetings, and use AI to surface the right proof at the right moment without adding new chores.

Final thoughts

In an era where sales management time is scarce and cross-functional alignment is critical, pipeline reviews must evolve from status updates to accountable decision forums. When reviews are tied to evidence-based inputs, shared definitions, and measurable habits, they drive real revenue growth rather than theater. The research reported by Harvard Business Review associates effective pipeline management with about 15 percent higher growth, and disciplined reviews make forecasts more predictable. Accountability is not a nice-to-have; it is the difference between busywork and real results.